After being talked about for quite a while, Google finally unveiled its new, substantially expanded API a couple of weeks ago. It provides access to data from the Google Search Console (formerly Google Webmaster Tools). For the first time, this interface makes it possible to retrieve the data for one’s own domain automatically, including data from the particularly interesting Search Analytics area. Over the past few weeks, we integrated this data into the Toolbox and learned a couple of things about Google’s data. In this blog post, we want to share what we discovered about the data, its uses and its limits.

Scope of the Search Console data

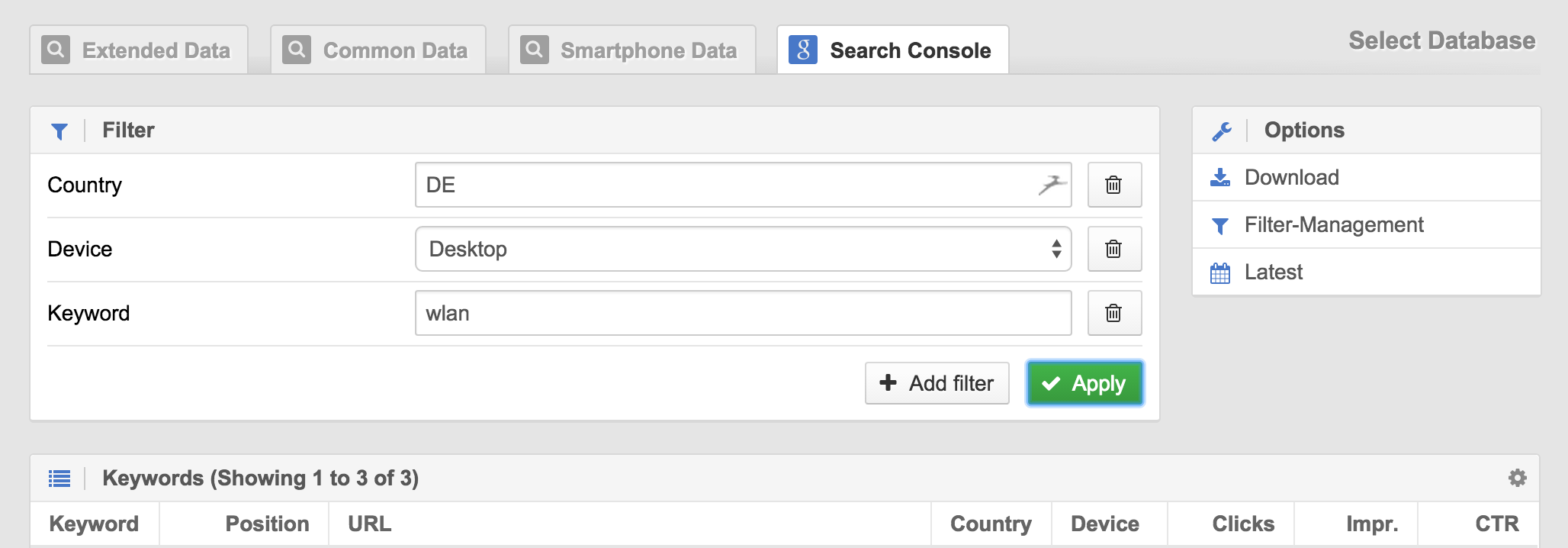

For each dataset, made up of keyword, URL, country and device (desktop, smartphone or tablet), Google measures the average ranking, the number of impressions (i.e., how often this combination appeared), the clicks (how often the URL was clicked on), and the click-through rate (CTR), which is the number of clicks divided by the number of impressions. Compared to the old referrer data (not provided), the impressions add a new, useful parameter. The problem, however, is that Google limits the number of results returned by the API to a maximum of 5,000 rows. For bigger websites that regularly exceed this number, this means that only part of the data will be visible. To give you an idea of what this means: with our own sistrix.de, we hover around this number, above it on some days and below it on others. One way to work around this limitation is to add parts of the website to the Search Console separately, as individual properties.
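To make the shape of such a dataset more concrete, here is a minimal Python sketch. It assumes the API response has already been fetched and decoded into a dictionary with a `rows` list, where each row carries its dimension values in `keys` (in the order keyword, URL, country, device) next to `clicks`, `impressions` and `position`. The names `Row`, `parse_rows` and `probably_truncated`, as well as the sample values, are made up for illustration and are not part of any Google library.

```python
from dataclasses import dataclass
from typing import List

ROW_LIMIT = 5000  # maximum number of rows per response, as described above


@dataclass
class Row:
    keyword: str
    url: str
    country: str
    device: str
    clicks: float
    impressions: float
    position: float  # average ranking

    @property
    def ctr(self) -> float:
        # CTR = clicks / impressions; avoid dividing by zero.
        return self.clicks / self.impressions if self.impressions else 0.0


def parse_rows(response: dict) -> List[Row]:
    """Turn an (assumed) response dictionary into Row objects."""
    rows = []
    for raw in response.get("rows", []):
        keyword, url, country, device = raw["keys"]
        rows.append(Row(keyword, url, country, device,
                        raw["clicks"], raw["impressions"], raw["position"]))
    return rows


def probably_truncated(rows: List[Row], limit: int = ROW_LIMIT) -> bool:
    # If the response is exactly as long as the limit, more data may exist.
    return len(rows) >= limit


# Hypothetical sample response with invented values.
sample_response = {
    "rows": [
        {"keys": ["seo tools", "https://example.com/", "deu", "MOBILE"],
         "clicks": 5, "impressions": 200, "position": 3.4},
        {"keys": ["seo blog", "https://example.com/blog", "aut", "DESKTOP"],
         "clicks": 0, "impressions": 40, "position": 8.1},
    ]
}

rows = parse_rows(sample_response)
for row in rows:
    print(row.keyword, row.device, f"{row.ctr:.1%}")
print("possibly truncated:", probably_truncated(rows))
```

A check like `probably_truncated` is only a heuristic: a response with exactly 5,000 rows does not prove that data was cut off, but it is a good reason to split the site into several properties and compare.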

User behavior influences the results

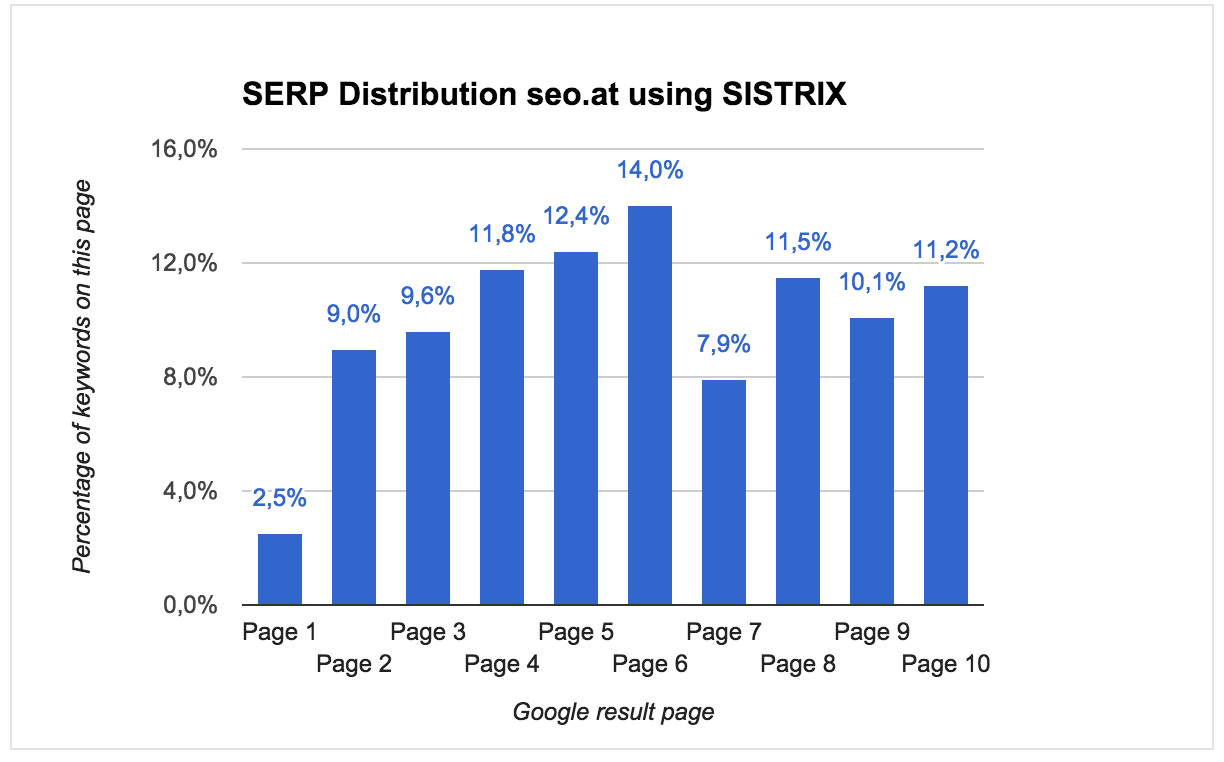

As a matter of principle, the Search Console only delivers data once a certain minimum number of impressions has occurred (unfortunately, Google does not specify the exact threshold). The consequences of this are best shown through an example. Here you can see the SERP distribution of seo.at based on Toolbox data:

For the entire keyword set, we always determine the first 100 ranking positions and calculate on which Google results page a domain can be found. As can be seen, only 2.5% of this domain’s rankings are on the first Google results page. Unfortunately, this is quite a common problem among WordPress blogs with quickly changing themes. If we were SEOs instead of a software company, it would be apparent to us that something has gone wrong, and the first step in analyzing the problem would already have been taken. Now let’s take a look at the same evaluation based on Search Console data:

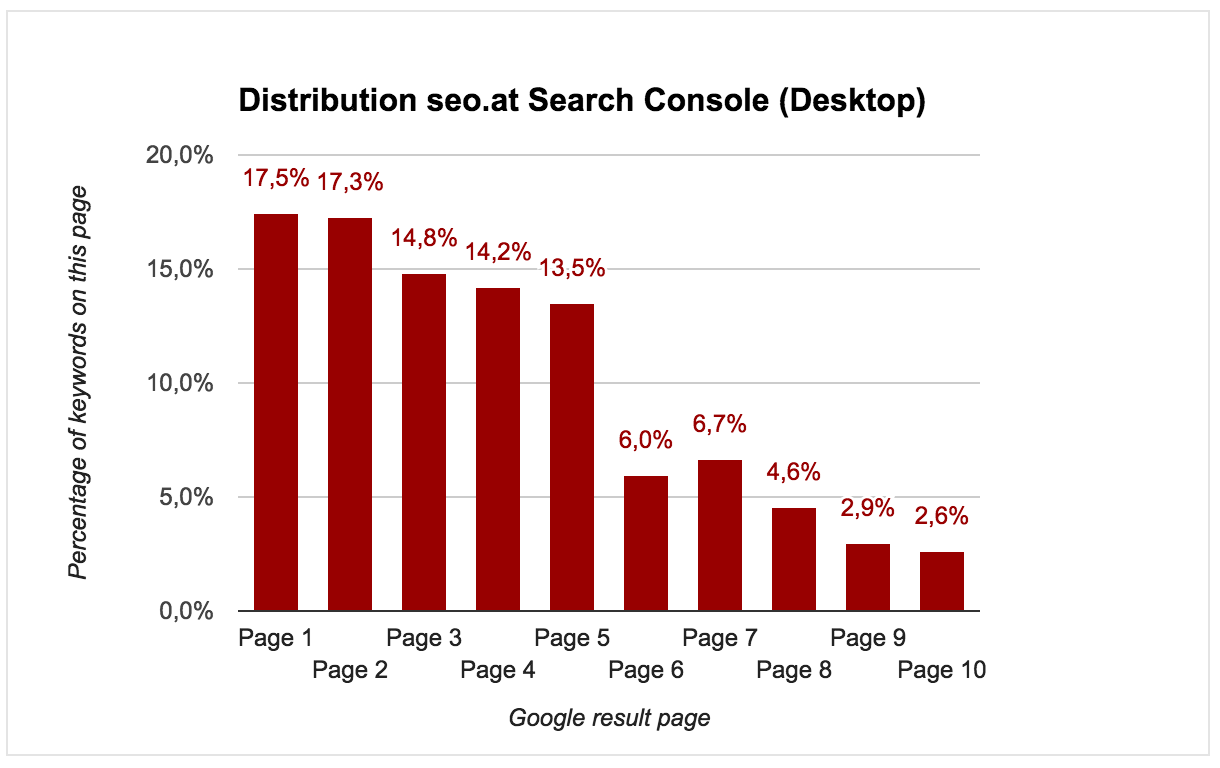

Here we get a completely different picture: based on this evaluation, most of the domain’s keywords already rank on the first page. So that means that everything is OK and that we needn’t worry, right? Wrong. The problem lies in the way the Search Console collects the data. Google only shows results that received at least an unspecified minimum number of impressions from users; i.e., the results depend on how the site’s visitors behave on the Google results pages. This becomes even more obvious when one takes a look at the smartphone data instead:

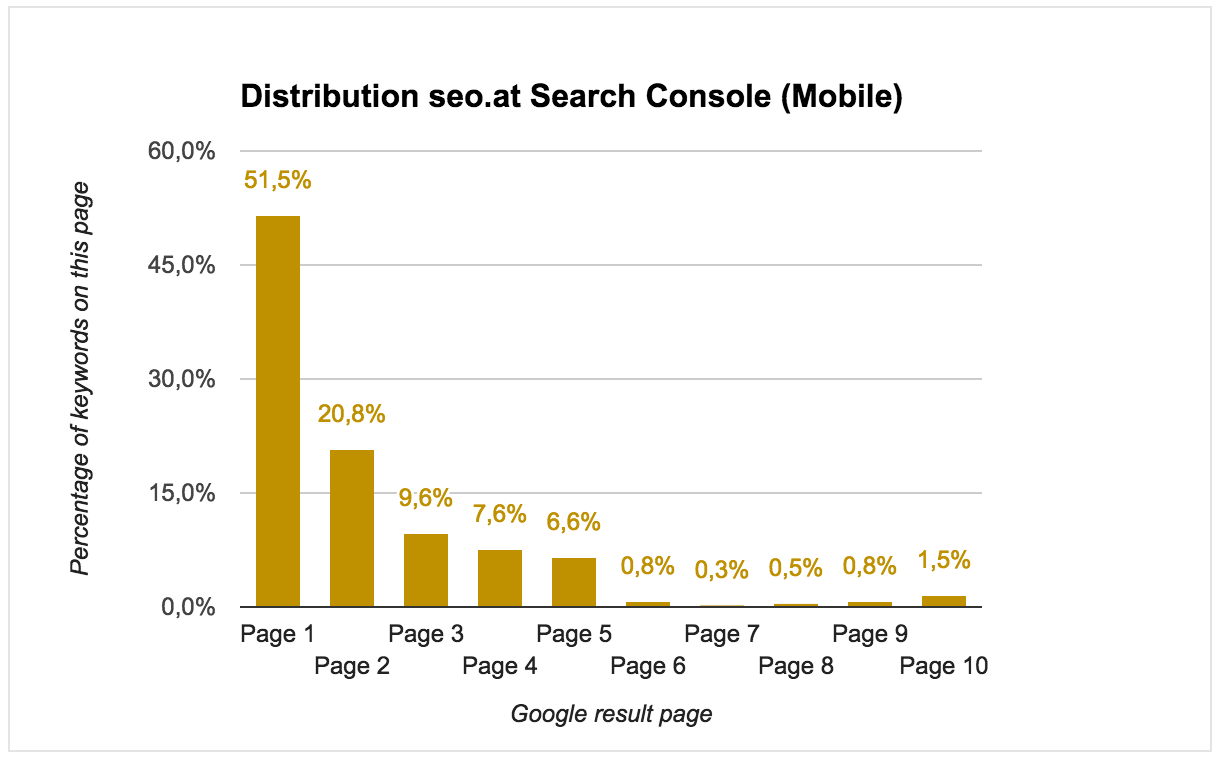

Since mobile phone users, because of the small screen, are even less inclined to click through to further results pages, the deviation is even easier to spot. Based on this data, one would assume that more than 50% of all mobile rankings for the domain are on the first results page. Unfortunately, this inherent problem of the Search Console does not just show itself when looking at different devices. Large differences dictated by user behavior can also be seen between navigational and informational search queries. And don’t even get me started on countries, time, and other variables…
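The distortion described in this section can be reproduced with a small Python sketch. It assigns each ranking to a results page, assuming ten results per page, and then compares the distribution over all rankings with the distribution over only those rankings that reach a minimum number of impressions. The threshold of 5 and all sample rankings are invented for illustration; Google does not publish the real threshold, and the function names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

Ranking = Tuple[float, int]  # (ranking position, impressions)


def results_page(position: float, per_page: int = 10) -> int:
    # Positions 1-10 are page 1, 11-20 are page 2, and so on
    # (assumes ten results per page).
    return math.ceil(position / per_page)


def page_distribution(rankings: List[Ranking],
                      min_impressions: int = 0) -> Dict[int, float]:
    """Share of rankings per results page, ignoring rankings
    with fewer than `min_impressions` impressions."""
    visible = [pos for pos, impressions in rankings
               if impressions >= min_impressions]
    if not visible:
        return {}
    counts = Counter(results_page(pos) for pos in visible)
    return {page: counts[page] / len(visible) for page in sorted(counts)}


# Invented example: rankings further back are hardly ever seen by users.
rankings = [(3, 120), (8, 40), (14, 6), (27, 1),
            (35, 0), (52, 0), (68, 0), (91, 0)]

all_rankings = page_distribution(rankings)
with_threshold = page_distribution(rankings, min_impressions=5)

print("all rankings, share on page 1:", all_rankings[1])
print("with impression threshold, share on page 1:", with_threshold[1])
```

In this invented example the share of first-page rankings rises sharply once the low-impression rankings are dropped, although nothing about the domain’s actual rankings has changed. The filter alone produces the rosier picture.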

Conclusion

With its new API, Google once again gives us access to the data we lost with the demise of referrer information. The additional information on impressions is very useful. Unfortunately, larger websites will run into the 5,000-row limit when exporting their data. Moreover, because only results above the impression threshold are reported, the data is not a viable basis for many analytical SEO evaluations and can lead to fundamentally flawed conclusions.