A few weeks ago, I saw a great “Whiteboard Friday” episode by Rand Fishkin, which I agree with 100%. I too think that Google is all about delivering the best answer for the user, and for this, Google needs user behaviour data in order to decide which search result deserves the top spot, which the second position, and so forth.

Even when Google does not have enough data from the SERPs, for example because the URL is too new, it is quite possible that Google will use the data it has for the entire domain to estimate the trustworthiness of the new URL.

Let me give you 3 examples:

(1) EURO and EURO

Usually, if a user searches for the keyword EURO, they are trying to find information related to the currency of the European Union (rates, currency converters, the history of the Euro, etc.). For most of the year, the search results are therefore about the EURO currency. Once every 4 years, however, users in the United Kingdom or Germany, who use EURO as an acronym for the European Football Championship, do not want to know anything about the EURO currency; they are interested in football instead.

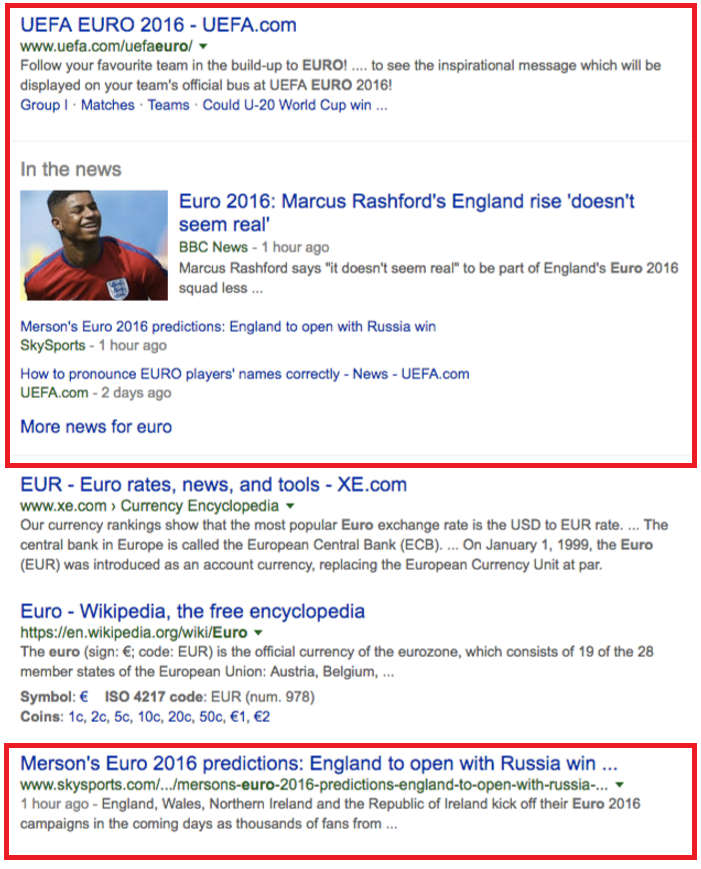

Google knows this and can resolve the user-intent dilemma very well by using data it collected in the past. The question that remains is: which domain should now rank on top for the keyword EURO? The one that delivers the best answer for the user and that Google can trust in this matter:

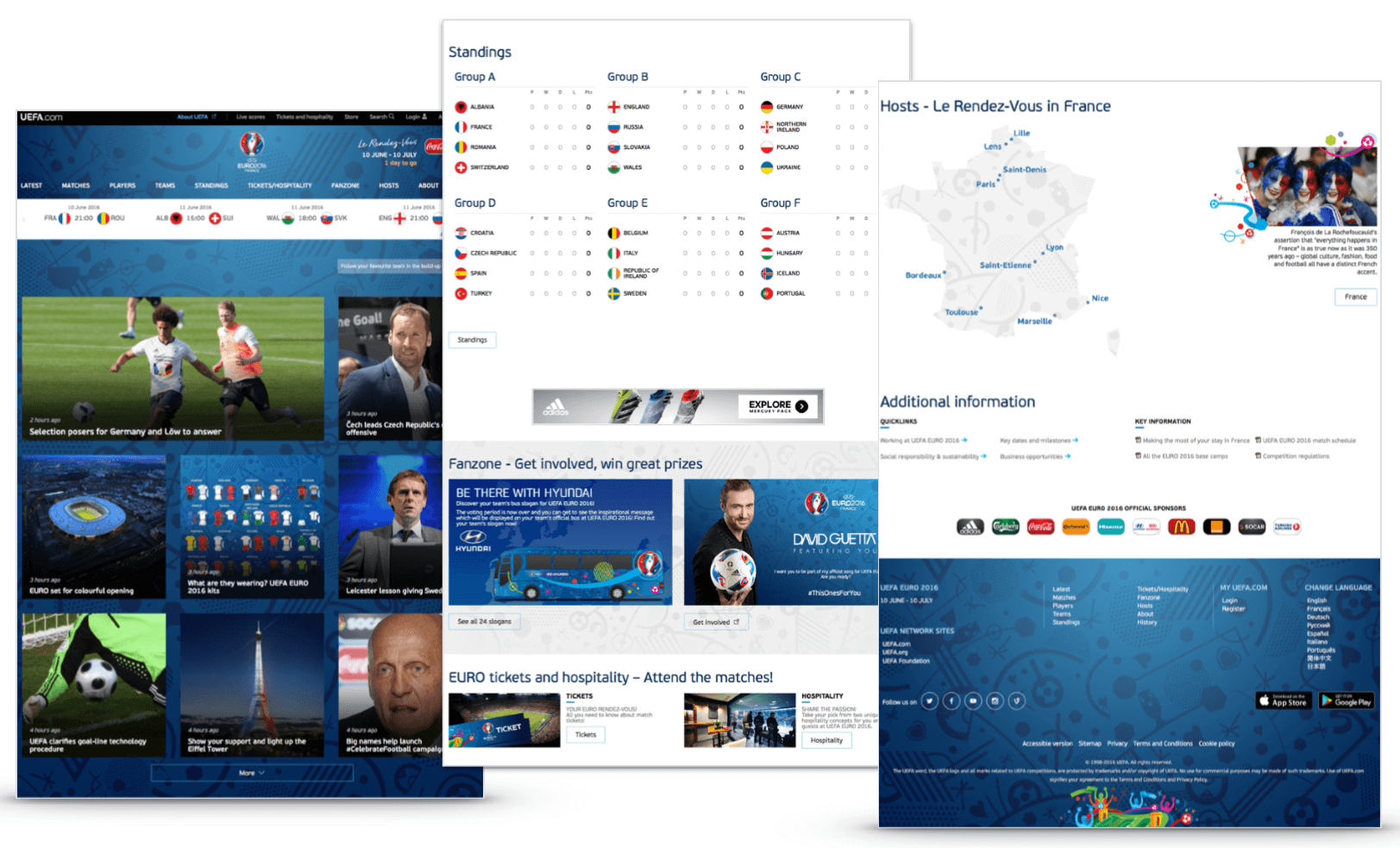

In this case, that would be the uefa.com domain – followed by a news vertical and skysports.com in fifth position.

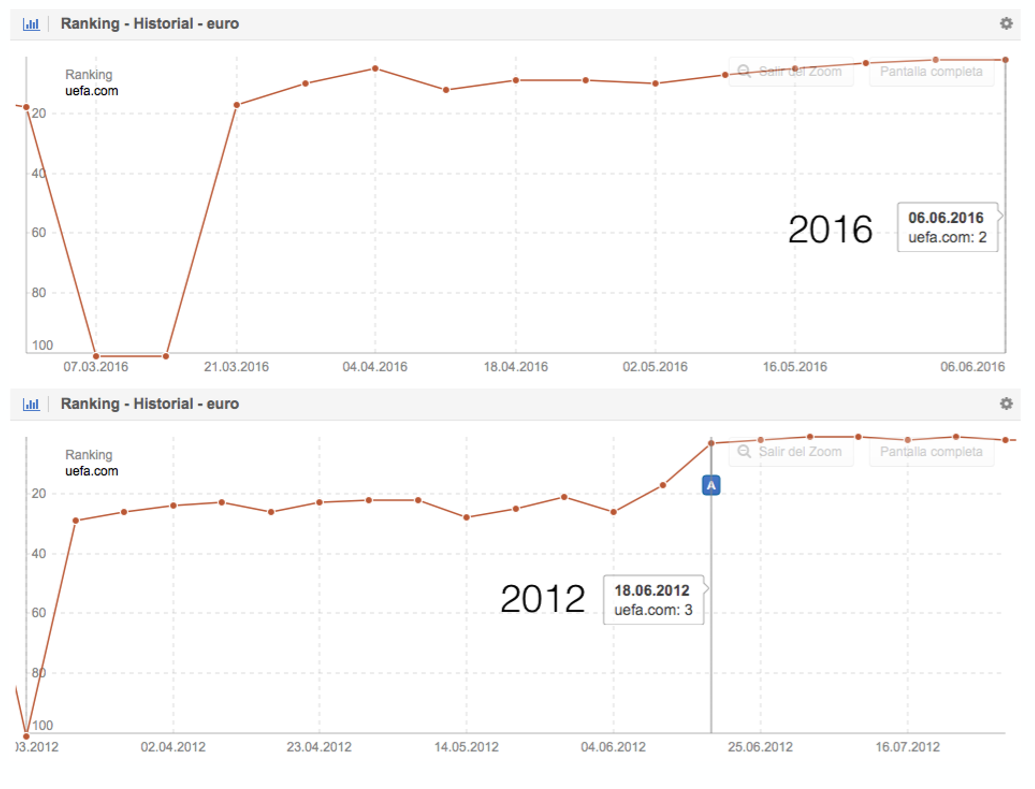

Next we have the ranking history for UEFA’s website for the keyword “Euro”, now and 4 years ago (on Google.co.uk):

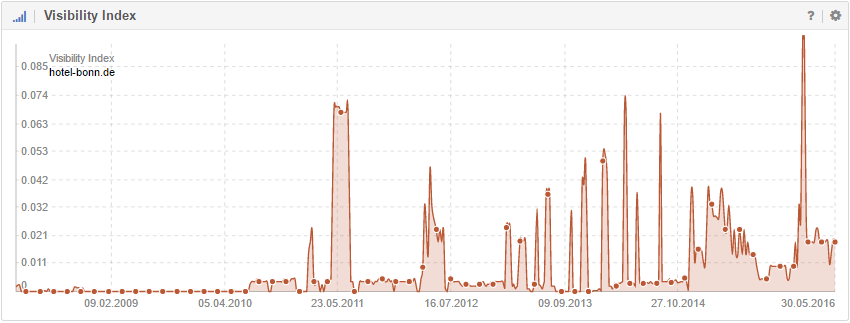

(2) Hotel Bonn

Bonn was the interim capital of Germany from 1949 to 1990 and is the city where Beethoven was born. A domain that you might expect to have something to do with Bonn is Hotel-Bonn.de. But here we have a hotel that is operated by the Bonn family, in another federal state. The next chart shows the Visibility Index history for Hotel-Bonn.de on Google.de, which nicely shows what may happen if Google sees a discrepancy between what it thinks is a great result for a specific search and the user behaviour it measures for that result:

For any user looking for a hotel in the city of Bonn, this domain might seem like the best choice. So, when Google shows Hotel-Bonn.de in first position for the search request “hotel bonn” and people follow the link, they will quickly return to Google’s search results to check out the next result. Google notices these “short clicks” for Hotel-Bonn.de and will lower the ranking for the domain, but still keep it in the Top-10, in case users really are looking for the hotel of the Bonn family.

After a while, Google will, once again, try out the domain at a higher ranking position, which makes the entire story come full circle.
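To make the idea of “short clicks” more concrete, here is a minimal sketch in Python of how you could measure them for your own pages. Google does not publish how it measures this, so everything here is an assumption for illustration: the `Click` record, the `short_click_rate` function, the 10-second threshold, the sample data and the second domain are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical threshold: a visit shorter than this that ends back on the
# results page counts as a "short click". The value is an assumption.
SHORT_CLICK_SECONDS = 10.0


@dataclass
class Click:
    url: str                   # result the user clicked on
    seconds_on_page: float     # time spent before leaving the page
    returned_to_results: bool  # did the user go back to the results page?


def short_click_rate(clicks, url):
    """Return the share of clicks on `url` that were short clicks.

    Returns 0.0 when there are no clicks for `url`.
    """
    visits = [c for c in clicks if c.url == url]
    if not visits:
        return 0.0
    short = sum(
        1
        for c in visits
        if c.returned_to_results and c.seconds_on_page < SHORT_CLICK_SECONDS
    )
    return short / len(visits)


# Made-up sample data, not real measurements.
clicks = [
    Click("hotel-bonn.de", 4.0, True),
    Click("hotel-bonn.de", 6.5, True),
    Click("hotel-bonn.de", 180.0, False),
    Click("example-hotel-in-bonn.test", 95.0, False),
]

rate = short_click_rate(clicks, "hotel-bonn.de")
```

In this made-up sample, two of the three visits to hotel-bonn.de end in a quick return to the results page. A result with such a high share of short clicks is the kind that, in the story above, would lose its top position.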

(3) Lena hits Wikipedia’s search results

In May 2010, the German singer Lena Meyer-Landrut won the Eurovision Song Contest – as far as I know, the British viewers were not really happy about this result at all :) – however, she was not really well known in Germany before the contest. She was a “new face” in this segment.

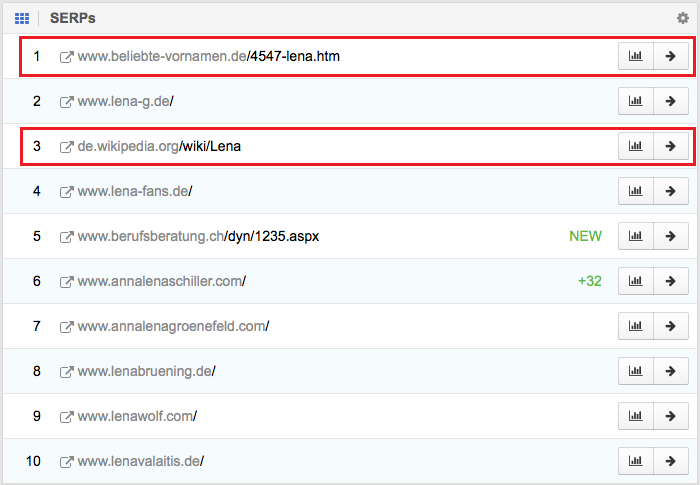

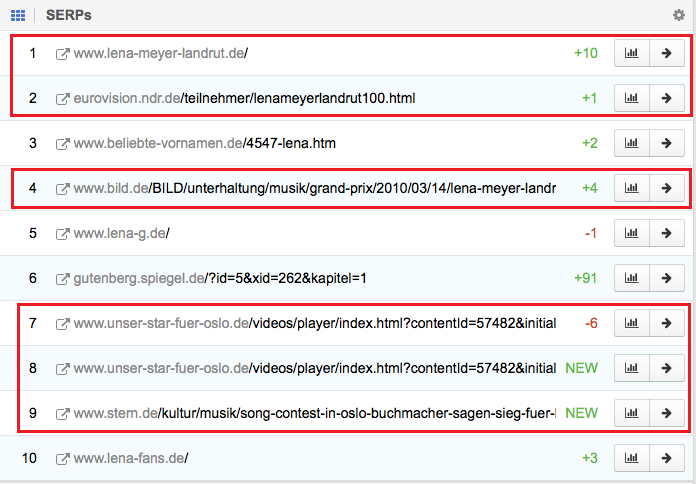

So, a few months before the Eurovision Song Contest, the Top-10 search results for the keyword “Lena” showed websites that had nothing to do with the singer “Lena”, such as the Wikipedia result for https://de.wikipedia.org/wiki/Lena:

One month before the Eurovision Song Contest, Google radically changed the Top-10 and has kept this change on Google.de:

I am pretty sure that the previous Wikipedia result suddenly had lower-than-expected CTR values and a high number of “Short Clicks”. The result pretty much dropped from the SERPs and now ranks at position 98. This shows that it does not matter how large or important your site may be, or how many links you have. If you cannot deliver the best answer for what users expect, they will let Google know.

Conclusion

It is much more important to concentrate your efforts on avoiding “Short Clicks” than to work only on the technical aspects of your site. As we saw for the keyword “Euro”, you can have a very good website, as far as the technical aspects are concerned, but if you are not delivering what the users are looking for, Google will find websites that do. This is even true for Wikipedia, as we saw with the results for “Lena”.

Please do not believe in myths that tell you that your content needs at least 300 words and a keyword density of 3%. This has not only been refuted by Google’s John Mueller time and time again, but we can actually see it in action right now on the UEFA website. They worry neither about text length nor about keyword density. Some of the best ranking pages are the results table and the location map:

Please also make sure you trust Google when they tell us that they use neither dwell time nor bounce rate for the rankings – the last time this happened was in March 2016, as Barry Schwartz explains. Just try to make Google’s objectives your own – deliver the best answer possible for what your visitors are looking for – and I believe you will become more successful.

I hope you like it.