The big Google updates of recent times have led many SEOs to rethink their approach: it’s no longer just about individual, clearly identifiable factors. Increasingly, it’s user behaviour in response to the user experience that determines success on Google. This blog post covers what user experience is, whether it’s a ranking factor and, importantly, what Google says about it.

- Artificial Intelligence vs Machine Learning

- Training data vs. ranking factor

- User Experience as a ranking factor

- UX Playbooks. Learn from Google.

- The Internet takes place on the smartphone.

- Good user experience lives by standards

- Google pursues its own interests.

- How can I measure user experience?

- Summary

In not-too-distant Google history, updates to the search algorithm had a clear goal: whether it was the quality of links (Penguin) or of texts (Panda), it was clear shortly after each update was rolled out what Google was aiming for. It has been different for some time now: the algorithm changes recently grouped under the name “Core Update” have caused massive ranking changes, but to this day no common denominator has been found for the affected domains.

In 2010, Eric Schmidt declared “Mobile First” the company motto. Three years ago, the new CEO Sundar Pichai turned “Mobile First” into “AI First”. Here’s a short backgrounder.

Artificial Intelligence vs Machine Learning

While popular media likes to write about artificial intelligence, the term machine learning (ML) is much more relevant to what Google is currently doing. Essentially, this involves the following: you show a computer a (very) large amount of already classified training data and let it learn from this data independently.

For Google Web Search, for example, the training data might include pages marked as spam by the Google Quality Raters. The computer can then learn from this data which features regularly occur on spam pages and which do not.

Where the creation of these algorithms used to be a task for humans, machine learning now takes over. Humans must still provide the training data and monitor the process, but the computer writes the algorithm based on what it has “learned” from the training data. The result is an algorithm that checks pages it has never seen before and states, as a percentage, how likely each checked document is to be spam.
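
The idea can be illustrated with a minimal sketch. It is not Google’s method: the feature names and the six labelled pages are invented, and a simple naive Bayes classifier stands in for whatever Google actually uses. It only shows the principle of learning from classified examples and then returning a spam probability for an unknown page.

```python
import math
from collections import Counter

# Hypothetical, hand-labelled training data: (page features, is_spam).
# The feature names are invented for illustration only.
TRAINING_DATA = [
    ({"keyword_stuffing", "hidden_text"}, True),
    ({"keyword_stuffing", "many_ads"}, True),
    ({"hidden_text", "many_ads"}, True),
    ({"original_text", "clear_navigation"}, False),
    ({"original_text", "many_ads"}, False),
    ({"clear_navigation", "fast_loading"}, False),
]


def train(examples):
    """Count how often each feature occurs on spam and non-spam pages."""
    feature_counts = {True: Counter(), False: Counter()}
    class_counts = Counter()
    vocabulary = set()
    for features, is_spam in examples:
        class_counts[is_spam] += 1
        feature_counts[is_spam].update(features)
        vocabulary |= features
    return feature_counts, class_counts, vocabulary


def spam_probability(features, model):
    """Return the estimated probability (0 to 1) that a page is spam."""
    feature_counts, class_counts, vocabulary = model
    total = sum(class_counts.values())
    log_scores = {}
    for label in (True, False):
        # Start with the prior: how common is this class overall?
        log_score = math.log(class_counts[label] / total)
        for feature in vocabulary:
            # Laplace smoothing avoids zero probabilities.
            p = (feature_counts[label][feature] + 1) / (class_counts[label] + 2)
            log_score += math.log(p if feature in features else 1 - p)
        log_scores[label] = log_score
    # Normalise the two class scores into a single probability.
    spam, ham = math.exp(log_scores[True]), math.exp(log_scores[False])
    return spam / (spam + ham)


model = train(TRAINING_DATA)
unknown_page = {"keyword_stuffing", "hidden_text", "fast_loading"}
print(f"Spam probability: {spam_probability(unknown_page, model):.0%}")
```

Nobody wrote a rule such as “hidden text means spam” here. The weighting of each feature comes entirely from the labelled examples, which is why the quality of the training data matters so much.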

Training data vs. ranking factor

The right training data is clearly the foundation for the right ML algorithms. But ask Google whether user data affects the rankings, and the answer is a repeated and consistent “no”.

Nobody really takes this answer from Google at face value, but with the background on machine learning from the previous section, it makes sense: Google (of course) trains its ML algorithms with user data. However, it is the result of this training that is used as the ranking factor: the algorithm built by the machine, not the user behaviour itself. Thus, Google’s answer is correct, and yet user behaviour does flow into the Google ranking process.
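
A small sketch may make this distinction more tangible. Everything in it is an assumption made for illustration: the three page features, the training rows and the choice of logistic regression are invented and say nothing about Google’s real systems. The point is only that user behaviour appears as the label during training, while the finished model scores a page from its features alone.

```python
import math

# Hypothetical training set. Each row pairs page features with a label
# derived from user behaviour (1 = users seemed satisfied, 0 = they did not).
# Feature names and values are invented for illustration.
FEATURES = ["loads_fast", "mobile_friendly", "intrusive_popups"]
TRAINING_ROWS = [
    ([1, 1, 0], 1),
    ([1, 0, 0], 1),
    ([0, 1, 0], 1),
    ([0, 0, 1], 0),
    ([1, 0, 1], 0),
    ([0, 1, 1], 0),
]


def sigmoid(x):
    return 1 / (1 + math.exp(-x))


def train(rows, epochs=500, learning_rate=0.5):
    """Fit logistic regression weights with stochastic gradient descent."""
    weights = [0.0] * len(FEATURES)
    bias = 0.0
    for _ in range(epochs):
        for values, satisfied in rows:
            predicted = sigmoid(bias + sum(w * v for w, v in zip(weights, values)))
            # User behaviour enters only here, as the training target.
            error = satisfied - predicted
            bias += learning_rate * error
            weights = [w + learning_rate * error * v for w, v in zip(weights, values)]
    return weights, bias


def score(page_values, model):
    """Score a page from its features alone; no user data is passed in."""
    weights, bias = model
    return sigmoid(bias + sum(w * v for w, v in zip(weights, page_values)))


model = train(TRAINING_ROWS)
print(f"Fast, mobile-friendly page: {score([1, 1, 0], model):.2f}")
print(f"Page with intrusive popups: {score([0, 0, 1], model):.2f}")
```

In this toy setup, `score` never sees a click or a dwell time, so it would be accurate to say that user data is not an input at ranking time, even though the weights exist only because of it.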

User Experience as a ranking factor

With that information, it should be clear that a good user experience (UX) leads to more satisfied users and ultimately to a better ranking. Precisely because there is no direct, clear connection between user experience and ranking, Google’s machine learning approach looks for features that indicate a positive user experience. As a result, it’s important to make your page resemble the pages with the best user experience.

UX Playbooks. Learn from Google.

Google is not just a team of algorithm engineers. It consists of many departments that want to help website owners (and help with Google’s quarterly goals? Or is it the other way around?). That’s why Google has created a number of “UX Playbooks”. These PDFs describe in detail what Google considers a good user experience and which pages are leading the way. I am not sure whether Google has officially published them anywhere, but the PDFs are at least publicly accessible on Google’s web server and indexed in Google Search. The following playbooks are currently available:

- UX Playbook Cars / Auto

- UX Playbook Content / News

- UX Playbook Finance

- UX Playbook Healthcare

- UX Playbook LeadGen

- UX Playbook Real Estate

- UX Playbook Retail

- UX Playbook Travel

In order not to overload Google’s bandwidth, we recommend reading your own local copy of the PDFs – and you definitely should. They are similar to the Search Quality Rater Guidelines, only much more pleasant to read. They provide a glimpse into Google’s dream world. Here are some of the focal points of the PDFs.

The Internet takes place on the smartphone.

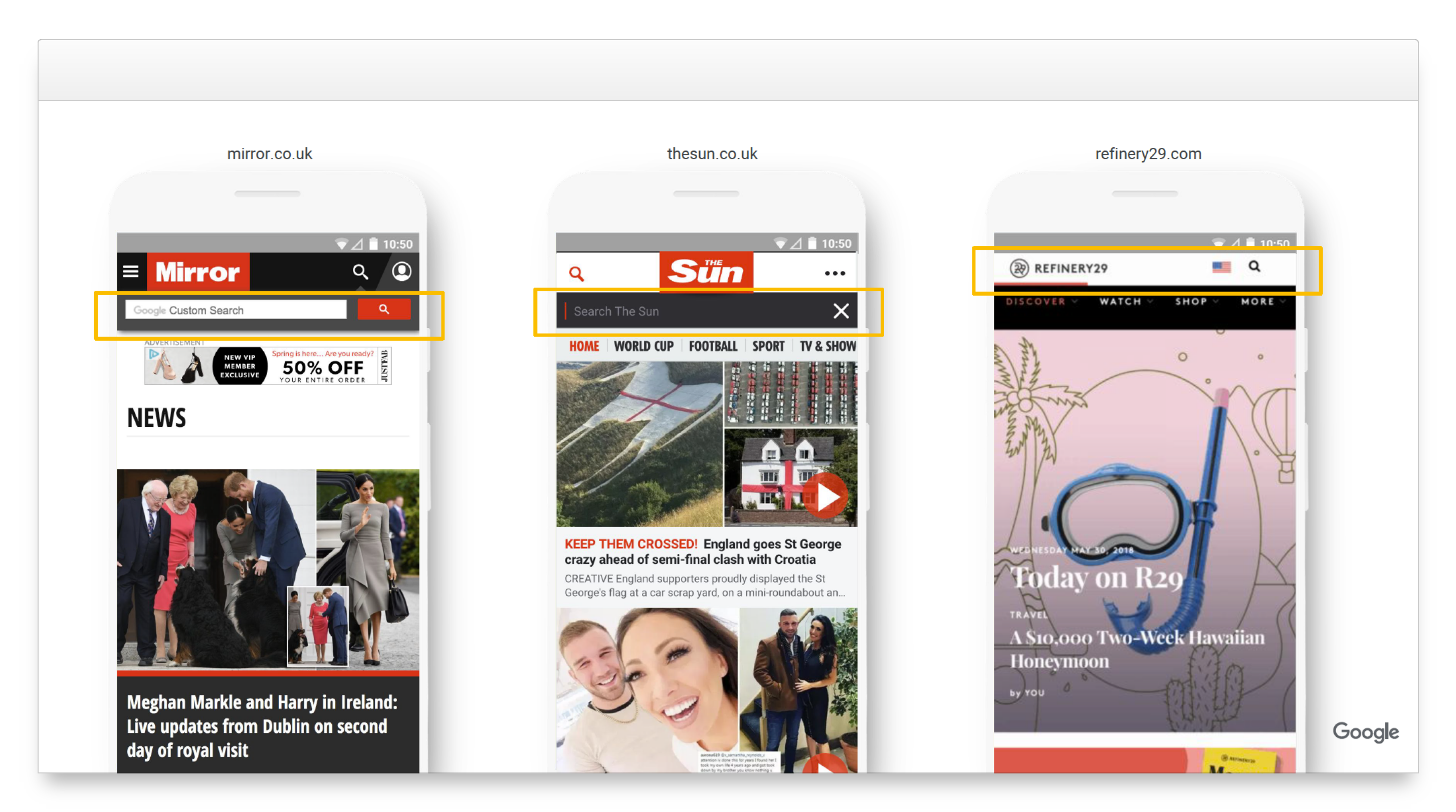

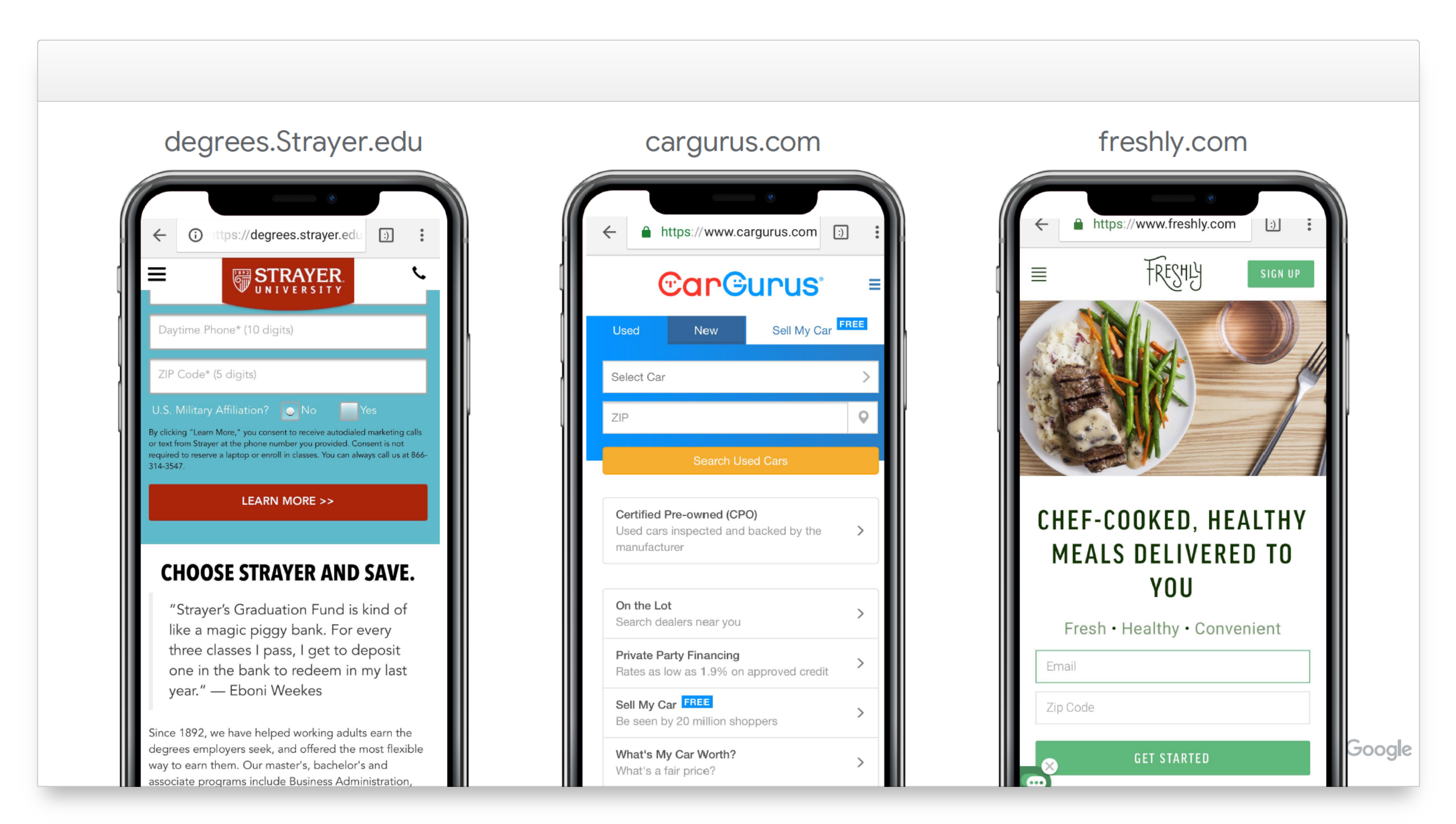

We, the people who deal with the Internet on a daily basis, usually work on very large monitors, or at least with laptops. But the reality is different: in almost every country in the world, more than half of all searches are made via the smartphone. Google takes that into account in the playbooks – every screenshot in these PDFs shows the smartphone version of the website, not the desktop version.

Good user experience lives by standards

If you look at the best-in-class examples from the playbooks one after the other, it quickly becomes apparent that it’s not creativity or fancy UX that works. Most examples are similar in structure and layout; often only the company colours and logos differ. Users have well-established expectations of how websites work and would like to see them fulfilled.

Google pursues its own interests.

The comments on the necessity of AMP clearly show that Google pursues its own interests and is willing to accept “irregularities” in its communication to do so. Of course, websites can be delivered extremely fast without AMP, but this option is not mentioned. Every statement from Google therefore raises the question of whether it serves Google’s own interests, and how much weight the recommendation deserves as a result.

How can I measure user experience?

As the inventor of the Visibility Index, we are somewhat proud of our contribution to making SEO success measurable and comparable. It is, however, worth considering which key figures matter for the seemingly fuzzy topic of user experience. Luckily, the Google playbooks also help us with this: possible metrics are mentioned for every suggestion. If you compare and summarise them, this is what emerges:

- Time on Site: How long do users stay on the site? In general, a longer time is better here. This metric suggests that the user’s expectations are being met.

- Page Views: How many pages are viewed on a website? Again, more page views are usually better. Do users find further information that interests them?

- Bounce Rate: In the case of search results, this is about so-called “pogo sticking”: if a user jumps from a result back to the search results and clicks on the next hit, this may indicate that they did not find what they were looking for in the first result.
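
As a rough sketch, these three metrics could be computed from your own session data as follows. The `Session` fields are a hypothetical schema, not the export format of any particular analytics tool, and a bounce is assumed here to be a session with exactly one page view. True pogo sticking happens on the search results page and cannot be observed from your own site, so this bounce rate is only a proxy.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One visit; the fields are hypothetical, not a real analytics schema."""
    duration_seconds: float
    page_views: int


def summarise(sessions):
    """Compute average time on site, pages per session and bounce rate."""
    if not sessions:
        raise ValueError("need at least one session")
    count = len(sessions)
    # Assumption: a bounce is a session with exactly one page view.
    bounces = sum(1 for s in sessions if s.page_views == 1)
    return {
        "avg_time_on_site": sum(s.duration_seconds for s in sessions) / count,
        "pages_per_session": sum(s.page_views for s in sessions) / count,
        "bounce_rate": bounces / count,
    }


sessions = [
    Session(duration_seconds=240, page_views=5),
    Session(duration_seconds=15, page_views=1),
    Session(duration_seconds=90, page_views=2),
    Session(duration_seconds=5, page_views=1),
]
print(summarise(sessions))
```

Tracked over time and per page type, figures like these make it possible to see whether a UX change moved the numbers in the intended direction.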

Summary

The user experience influences the Google ranking. While not used as a direct ranking factor, it feeds into the machine learning process.

The expected user experience is now largely standardised and familiar to users. Google’s UX Playbooks contain many examples of successful, best-in-class UX.

Since SEO success is increasingly coupled with the fulfilment of the search intention, successful SEO must go hand in hand with a successful user experience.
