Prompt Research is keyword research for AI search. The tool shows which topics users search for on AI platforms and provides an in-depth analysis of each topic, covering intent, customer journey, target audience, and specific prompts for each platform.

- The Solution: From Prompts to Topics

- How Prompt Research Works

- Topic Overview

- Topic-Detail

- Target Audience and Strategy

- User Prompts

- Search Volume in Prompt Research

- What Does the Search Volume Show?

- How Is the Search Volume Calculated?

- Methodological Premises

- Why Is There No Exact Search Volume for AI Search?

- Further Development

- What Is the Search Volume Suitable For?

- What Is It Not Suitable For?

The tool currently covers Germany. Data for additional countries and languages is being prepared.

In classic Google Search, demand can be measured clearly: keywords and search volumes show what is being searched for and how often. “Gas barbecue” has a measurable monthly search volume, and content can be planned around it.

In AI search, this no longer works. Users formulate individual prompts instead of entering standardised keywords. Rather than “gas barbecue”, one user might ask ChatGPT: “I’m looking for a gas barbecue with at least 3 burners for my roof terrace, ideally compact and with a side burner. Budget up to £500.” Another might ask AI Mode: “Compare the pros and cons of gas barbecues and charcoal barbecues for beginners.” And a third might ask AI Overviews: “Gas barbecue winter storage.”

The result: Many prompts are unique or occur only very rarely. Analysing individual prompts and building content strategies around them is not practicable.

The Solution: From Prompts to Topics

Prompt Research solves this problem through a layer of abstraction. Instead of analysing individual prompts, the tool groups similar prompts into topics. A topic brings together all prompts that address the same subject and the same goal closely enough to be treated as one. The topic “Gas barbecue winter storage”, for example, contains all variants of user questions around storing and protecting a gas barbecue in winter, regardless of whether they are phrased briefly or in detail, and regardless of which platform they appear on.

This clustering makes AI search demand analysable: instead of millions of unique prompts, clearly defined topics with aggregated metrics are available. The foundation consists of over 62 million real user questions, grouped into over 1.4 million topics.
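The abstraction step can be pictured with a minimal sketch. It assumes each prompt already carries a topic label; how the tool assigns that label is not described here, and the `Topic` class, the function name, and the second topic name are purely illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class Topic:
    """A topic: all prompts that share a subject and a goal."""
    name: str
    prompts: list[str] = field(default_factory=list)
    platforms: set[str] = field(default_factory=set)


def group_into_topics(labelled_prompts):
    """Group (platform, prompt, topic_name) triples by topic name.

    Assumption: the topic label is given. The real clustering method
    is not part of this sketch.
    """
    topics: dict[str, Topic] = {}
    for platform, prompt, topic_name in labelled_prompts:
        topic = topics.setdefault(topic_name, Topic(topic_name))
        topic.prompts.append(prompt)
        topic.platforms.add(platform)
    return topics


# Hypothetical sample data, modelled on the examples in the text above.
labelled_prompts = [
    ("AI Overviews", "Gas barbecue winter storage",
     "Gas barbecue winter storage"),
    ("ChatGPT", "How do I protect my gas barbecue on the roof terrace over winter?",
     "Gas barbecue winter storage"),
    ("AI Mode", "Compare the pros and cons of gas barbecues and charcoal barbecues for beginners.",
     "Gas barbecue vs. charcoal barbecue"),
]

topics = group_into_topics(labelled_prompts)
for topic in topics.values():
    print(topic.name, len(topic.prompts), sorted(topic.platforms))
```

Short and long phrasings from different platforms end up in the same topic, which is what makes aggregated metrics per topic possible.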

How Prompt Research Works

The starting point is the same as with classic keyword research: enter a keyword and start the search. Prompt Research searches the database both for the entered keyword and semantically for thematically related topics.

Topic Overview

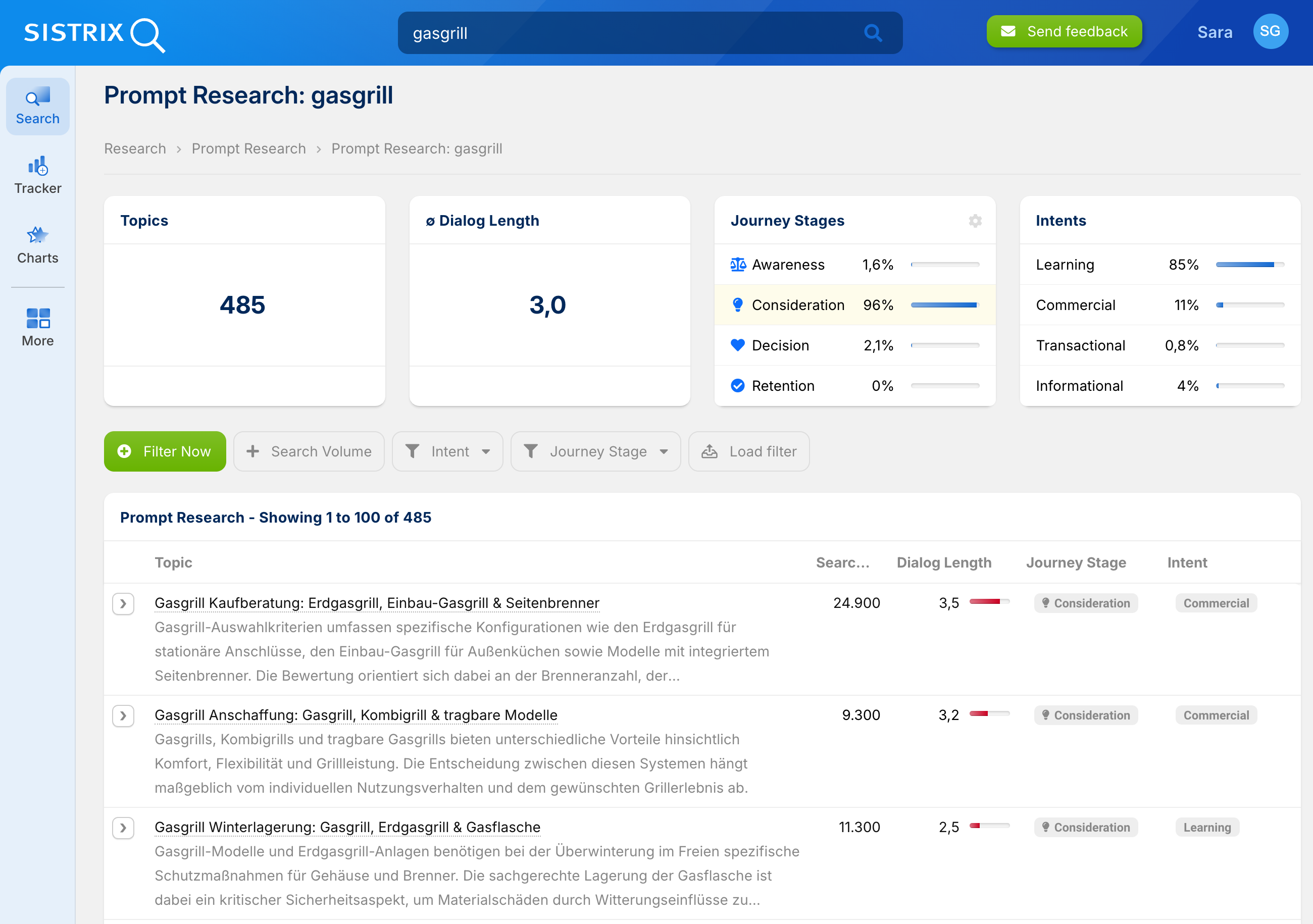

The result is an overview of all matching topics. For “gas barbecue”, the tool finds, for example, 485 topics, ranging from “Gas barbecue buying guide” and “Gas barbecue purchase: combination grills & portable models” to “Gas barbecue winter storage” and “Gas barbecue heat optimisation”. The topics cover the entire usage cycle: from the purchasing decision through to operation and maintenance.

The header summarises the aggregated values of all found topics: number of topics, average dialogue length, distribution across the customer journey phases, and distribution of intents.

The table below lists the individual topics with name, description, search volume, dialogue length, customer journey stage, and intent.

Filters help to narrow down the often large number of topics to those that are strategically relevant. In addition to the quick filters for intent and customer journey stage, custom filters can be created via “Filter now”. Filterable fields are: topic, search volume, dialogue length, next step, buyer persona, and emotional driver.

This lets you filter not only by classic metrics but also by the psychological and strategic data points:

- Next step contains ‘buy’ finds topics where the logical next step is a purchase. Ideal for isolating commercially relevant topics.

- Buyer persona contains ‘beginner’ finds topics whose target audience consists of newcomers. Useful for planning introductory content in a targeted way.

- Emotional driver contains ‘fear’ finds topics where uncertainty or concern is driving the search. These topics benefit from trust-building content.

- Dialogue length > 4 finds particularly advice-intensive topics with a high need for explanation.

Filters can be combined and saved.
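The filter logic described above can be sketched as composable predicates. This is an illustration only, not the tool's implementation or an export format: the `TopicRow` fields mirror the filterable fields named above, while the helper names and all sample values are assumptions.

```python
from dataclasses import dataclass


@dataclass
class TopicRow:
    topic: str
    search_volume: int
    dialogue_length: float
    next_step: str
    buyer_persona: str
    emotional_driver: str


def contains(field_name, needle):
    """Case-insensitive 'contains' filter on a text field."""
    needle = needle.lower()
    return lambda row: needle in getattr(row, field_name).lower()


def greater_than(field_name, threshold):
    """'Greater than' filter on a numeric field."""
    return lambda row: getattr(row, field_name) > threshold


def apply_filters(rows, *predicates):
    """Keep only rows that satisfy every filter (filters are AND-combined)."""
    return [row for row in rows if all(p(row) for p in predicates)]


# Hypothetical rows; the values are invented for illustration.
rows = [
    TopicRow("Gas barbecue buying guide", 25000, 4.2,
             "Buy a suitable model", "Beginner on a budget",
             "Fear of a costly wrong decision"),
    TopicRow("Gas barbecue winter storage", 8000, 1.8,
             "Buy a weatherproof cover", "Experienced terrace owner",
             "Concern about frost damage"),
    TopicRow("Gas barbecue heat optimisation", 3000, 4.5,
             "Test a new burner setting", "Ambitious hobby griller",
             "Curiosity"),
]

commercial_and_complex = apply_filters(
    rows,
    contains("next_step", "buy"),
    greater_than("dialogue_length", 4),
)
print([row.topic for row in commercial_and_complex])
```

Combining “next step contains ‘buy’” with “dialogue length > 4” isolates topics that are both commercially relevant and advice-intensive.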

Topic-Detail

Clicking on a topic opens the detail view with all available data points. The information is structured so that it can serve directly as a briefing for content creation or for optimising existing content.

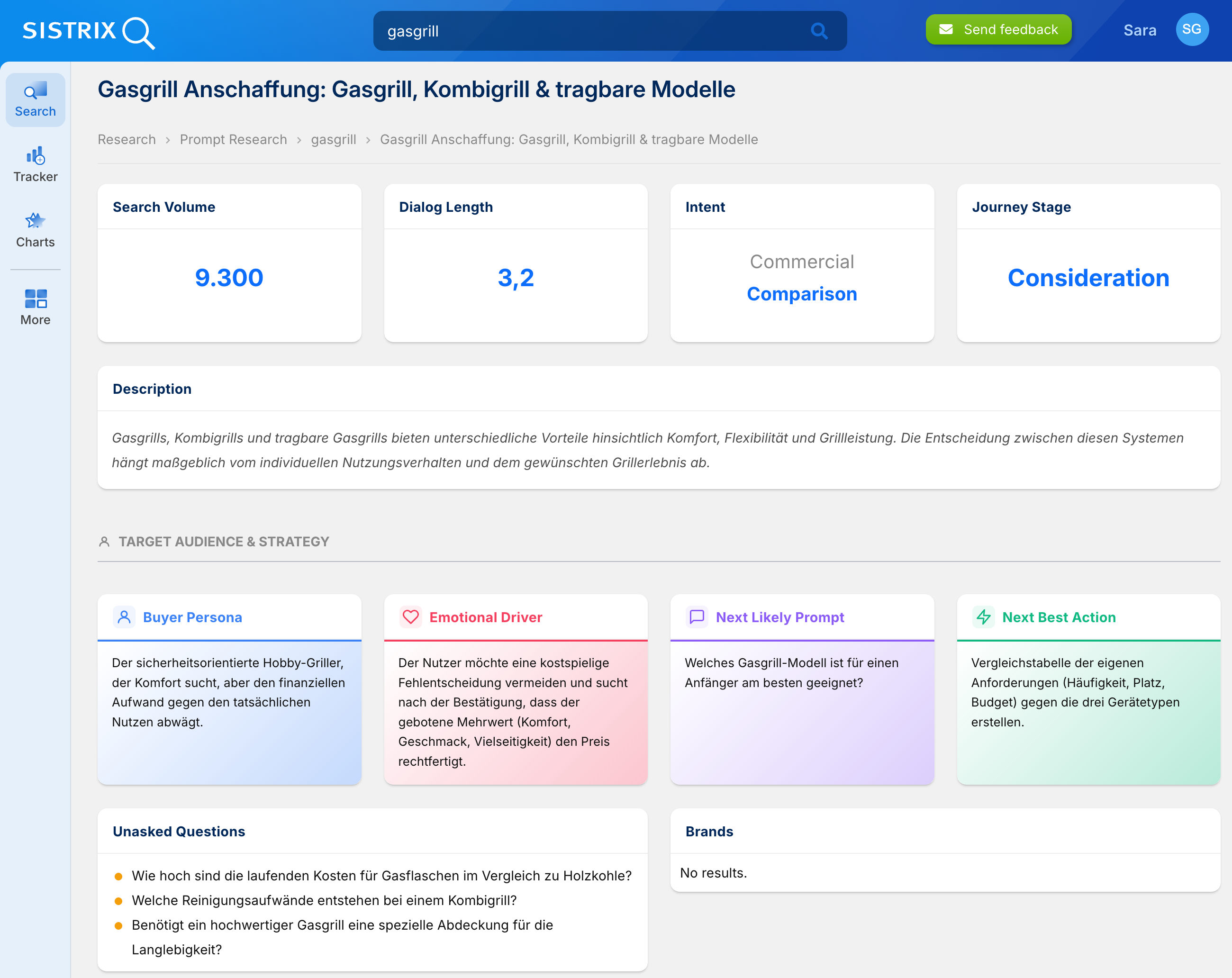

At the top are the four key metrics: search volume, dialogue length, intent, and customer journey stage. Together they provide a quick overview: How large is the topic, how complex is it, what does the user want, and where do they stand in their decision-making process?

The dialogue length is particularly relevant for content planning. A value of 1.5 (simple factual query) calls for a short, precise answer. A value of 3.5 (complex advisory conversation) indicates that the user has many questions and that in-depth content with multiple sections, comparisons, or decision-making aids is required.

Description: A brief, factual summary of the topic. It helps to quickly categorise a topic and distinguish it from similar-sounding topics.

Target Audience and Strategy

This section provides the briefing behind the numbers. Rather than simply knowing that a topic is “Commercial” and sits in the “Consideration” phase, it shows who is specifically searching and why.

The buyer persona describes the typical person behind the search. For “Gas barbecue purchase”, this is, for example, “the safety-conscious hobby griller who seeks comfort but weighs the financial outlay against the actual benefit.” This is directly actionable: the content should emphasise safety and convenience whilst also providing a clear cost-benefit argument.

The emotional driver shows what is truly motivating the search. Often this is not the obvious question, but a deeper underlying motivation. For “Gas barbecue purchase”, it is the fear of making a costly wrong decision. Content that addresses this fear (e.g. through a structured comparison or a clear recommendation) will perform better than a straightforward feature list.

The next user question shows where the journey is heading. If, after receiving an answer, the user’s next question is “Which gas barbecue model is best suited for a beginner?”, then the content should anticipate this question or link directly to it. This keeps the user within your own content ecosystem rather than sending them back to the AI to ask again.

The next step identifies the logical action following the information phase. This is often a purchase, but can also be a sign-up, a download, or further research. For the content strategy, this value indicates which conversion goal is realistic: when the next step is “creating a comparison table of one’s own requirements against the three device types”, it is clear that the user is not yet ready to buy, but rather needs comparison content.

Unasked questions are questions the user has not asked, but should be aware of in order to make a better decision or avoid mistakes. For “Gas barbecue purchase”, for example: “What are the ongoing costs for gas canisters compared to charcoal?” or “Does a high-quality gas barbecue require a special cover for longevity?”

Unasked questions are often the best starting points for content that stands out from the competition. Whilst most content answers the obvious questions, content that addresses unasked questions demonstrates expertise and builds trust. In AI responses, precisely this depth is rewarded.

Brands shows the brands that are relevant to this topic. Not every topic has relevant brands. Where brands are listed, they help to assess which competitors are already visible for a topic and which ones your own content must compete against.

User Prompts

Under “User Prompts”, the tool displays prompts for each AI platform: AI Mode, ChatGPT, AI Overviews, Gemini, Copilot, and Perplexity. The prompts differ by platform because users search differently on each platform.

On AI Overviews, prompts are short and keyword-oriented, comparable to classic Google search queries. On AI Mode, conversational research questions are posed, aimed at synthesis and comparison. On ChatGPT, prompts are considerably more detailed: with specific context, information about the user’s own situation, and particular requirements. On Gemini, the focus is on concise problem-solving. Copilot and Perplexity round out the picture with their respective platform-typical questioning patterns.

These differences are relevant for content optimisation. Content that is intended to be visible in AI Overviews must be optimised for short factual queries. Content aimed at ChatGPT visibility, on the other hand, must be able to answer complex, context-rich questions. The user prompts show concretely what type of questions are asked on which platform.

Search Volume in Prompt Research

What Does the Search Volume Show?

The search volume in Prompt Research is a cross-channel market indicator. It estimates the monthly demand for a topic across the relevant AI platforms: Google AI Overviews, ChatGPT, Gemini, and others.

The value answers the question: How great is the information need for this topic across the entire AI ecosystem? Instead of reporting incomplete individual values per platform, this consolidated value delivers the total potential. This allows topics to be prioritised strategically and relative demand to be assessed.

The search volume in Prompt Research is a standalone metric for the AI market. It measures estimated demand in AI search and is not directly comparable to classic Google search volume.

How Is the Search Volume Calculated?

The calculation model translates global usage data into concrete, topic-related market values. The calculation is carried out in four steps:

Step 1: Determining total volume and isolating search intent. First, we capture the global volume of AI platforms. ChatGPT processes more than 2.5 billion messages per day according to OpenAI; for other platforms we use comparable data or well-founded industry benchmarks. Since generative AI is also used for creative writing, programming, and other tasks without a research focus, we cleanse the data statistically so that the pure information and search need remains.

Step 2: Localisation and weighting through crawling data. Using the known share of each country in global usage (visitor share), we scale the volume down to the German market. For Google AI Overviews, we additionally use our own crawling infrastructure: we measure for which topics and search intents Google serves an AI Overview. This visibility data flows directly into the calculation as a weighting factor. (Note: The localisation data is based on Germany.)

Step 3: Distribution across individual topics. Since AI platforms do not publish demand data at topic level, we use real demand from classic Google Search as a proxy for distribution. The premise: a topic that generates a high information need in Google Search has a correspondingly high relevance in AI search as well. We use Google traffic as a distribution key to break down the previously determined total AI volume proportionally across individual topics.

Step 4: Rounding values. The results are slightly rounded to avoid creating a false sense of precision. A displayed value of 25,000 does not mean exactly 25,000 queries, but rather indicates the order of magnitude.
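The four steps can be sketched roughly as follows. This is a simplified illustration, not the actual calculation model: the search share, the country share, and the Google demand figures are invented placeholders, the AI Overviews weighting factor from Step 2 is left out, and all function and parameter names are assumptions. Only the figure of 2.5 billion ChatGPT messages per day comes from the text above.

```python
import math


def round_to_significant(value, digits=2):
    """Step 4: keep only the leading digits to signal an order of magnitude."""
    if value <= 0:
        return 0
    magnitude = math.floor(math.log10(value))
    factor = 10 ** (magnitude - digits + 1)
    return int(round(value / factor) * factor)


def estimate_topic_volumes(messages_per_day, search_share, country_share,
                           google_demand, days_per_month=30):
    """Steps 1 to 3: total volume -> search share -> country -> topics."""
    # Steps 1 and 2: isolate search usage and localise, per platform.
    total_monthly = sum(
        messages * search_share[platform] * country_share[platform] * days_per_month
        for platform, messages in messages_per_day.items()
    )
    # Step 3: distribute proportionally, using Google demand as the key.
    total_demand = sum(google_demand.values())
    return {
        topic: round_to_significant(total_monthly * demand / total_demand)
        for topic, demand in google_demand.items()
    }


# Placeholder inputs: only the ChatGPT message count is taken from the text.
volumes = estimate_topic_volumes(
    messages_per_day={"ChatGPT": 2_500_000_000},
    search_share={"ChatGPT": 0.2},      # hypothetical share of search-like use
    country_share={"ChatGPT": 0.03},    # hypothetical share of the German market
    google_demand={                     # hypothetical Google demand per topic
        "Gas barbecue buying guide": 3000,
        "Gas barbecue winter storage": 1000,
    },
)
print(volumes)
```

With only two topics in the distribution key, the printed values are far larger than anything realistic. In the real model the total is spread across the full topic database, so the sketch shows only the mechanics of proportional distribution and rounding.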

Methodological Premises

The search volume is a data-driven benchmark, not an exact measurement. Every statistical model is based on premises, which we disclose here:

Premise 1: Proportional information demand. We assume that the relative distribution of demand between topics in AI search is structurally similar to Google Search. If “descale coffee machine” generally generates a greater search need than “clean coffee machine”, the model reflects this ratio for AI search as well. Individual topics may deviate from this in practice. By grouping many individual prompts into a topic, statistical outliers are absorbed: individual prompts can fluctuate considerably in frequency, but at topic level these fluctuations even out. The more prompts a topic comprises, the more stable and comparable the value becomes.

Premise 2: Cross-platform average. The distribution between AI platforms is not reported per topic but reflects the German market as a whole. (Note: The platform distribution data is based on Germany.) In practice, certain topics may be disproportionately popular on individual platforms (e.g. complex research queries on ChatGPT, quick local questions on AI Overviews).

Premise 3: Currency of the data basis. We draw on the most up-to-date available usage data from AI providers and third-party sources, and regularly update the calculation basis.
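The stabilising effect mentioned under Premise 1 can be made precise under a simplifying assumption that is not part of the published model: suppose a topic comprises $n$ prompts whose monthly frequencies $X_1, \dots, X_n$ are independent, each with mean $\mu$ and variance $\sigma^2$. For the topic total $S_n$ this gives:

$$
S_n = \sum_{i=1}^{n} X_i, \qquad
\mathbb{E}[S_n] = n\mu, \qquad
\operatorname{Var}(S_n) = n\sigma^2, \qquad
\frac{\sqrt{\operatorname{Var}(S_n)}}{\mathbb{E}[S_n]} = \frac{\sigma}{\mu\sqrt{n}}
$$

The relative fluctuation of the topic total therefore shrinks in proportion to $1/\sqrt{n}$. Real prompts are neither fully independent nor identically distributed, so this indicates the direction of the effect rather than its exact size.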

Why Is There No Exact Search Volume for AI Search?

In classic Google Search, Google provides aggregated search volumes via the Keyword Planner. No comparable tool exists for AI search. No AI provider publishes demand data at the level of individual topics. Furthermore, there is no unified standard for what constitutes “a search”: a standalone AI Overview is something different from a single message within a longer ChatGPT dialogue.

An exact measurement is therefore not possible by the nature of the system. The search volume in Prompt Research is the best currently available data-driven approximation of the AI search market.

Further Development

The methodology is continuously being developed. As soon as AI platforms publish more data or new data sources become available, these will be incorporated into the model. The displayed values may therefore change over time, including retrospectively.

What Is the Search Volume Suitable For?

- Prioritising topics: The value shows which topics have high demand and which are less relevant. The relative order of magnitude is decisive for planning content measures.

- Comparing potential: In combination with dialogue length, the search volume shows where potential exists for in-depth content. High volume with high dialogue length points to a substantial need for explanation.

- Assessing markets: Using the aggregated values, the volume of entire topic areas in AI search can be estimated.
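A prioritisation along these lines could look like the following sketch. The dictionary keys, the function name, and the sample values are assumptions. The threshold of 3.5 follows the dialogue length example given for complex advisory conversations and is not an official cut-off.

```python
def prioritise(topics, in_depth_threshold=3.5):
    """Rank topics by search volume and flag candidates for in-depth content.

    Only the relative order matters; the volumes are estimates.
    """
    ranked = sorted(topics, key=lambda t: t["search_volume"], reverse=True)
    return [
        {**t, "in_depth_candidate": t["dialogue_length"] >= in_depth_threshold}
        for t in ranked
    ]


# Hypothetical topics with invented values.
sample_topics = [
    {"topic": "Gas barbecue winter storage", "search_volume": 8000, "dialogue_length": 1.8},
    {"topic": "Gas barbecue buying guide", "search_volume": 25000, "dialogue_length": 4.2},
    {"topic": "Gas barbecue heat optimisation", "search_volume": 3000, "dialogue_length": 4.5},
]

for row in prioritise(sample_topics):
    print(row["topic"], row["search_volume"], row["in_depth_candidate"])
```

Topics that rank high and are flagged combine high demand with a substantial need for explanation.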

What Is It Not Suitable For?

- Direct comparisons with Google search volume: The metrics measure different ecosystems and are not directly comparable.

- Platform isolation: The volume shows the overall market. It is not suitable for analysing demand exclusively on ChatGPT or exclusively on Gemini.

- Up-to-the-minute trends: The model is based on monthly average values and does not reflect short-term fluctuations.