The “Projects” overview page lets you create new Onpage projects or jump back into an existing one. This part of SISTRIX is useful for checking whether your website has specific Onpage issues and for monitoring an individual keyword set.

Your Onpage Projects

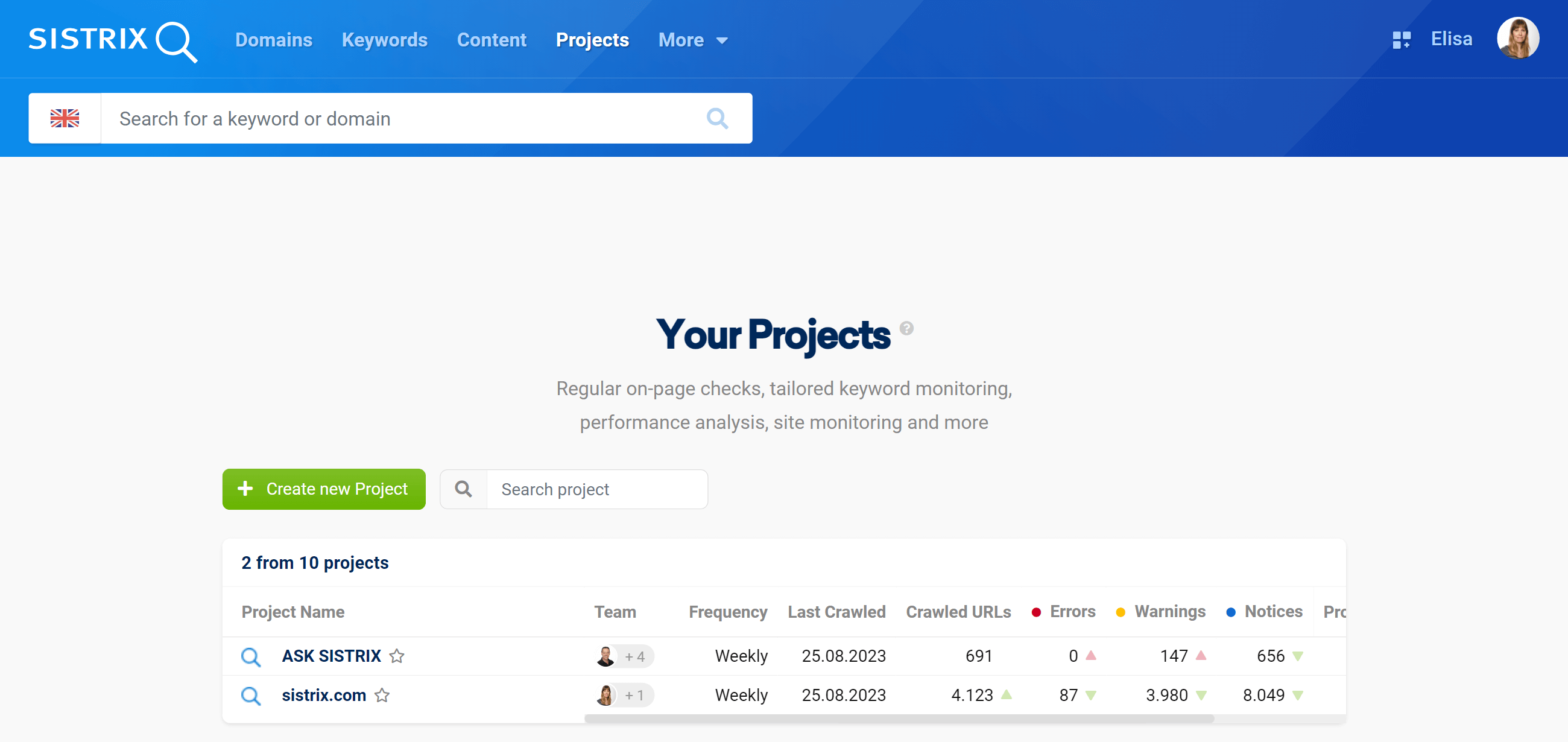

Here you’ll find a list of all your projects and shared projects, as well as the remaining project slots:

- Project Name: The name used within SISTRIX to identify the Onpage project.

- Team: How many team members have access to the project.

- Frequency: How often the Onpage crawler is started automatically.

- Last Crawled: When the Onpage project was last crawled.

- Crawled URLs: Total number of URLs crawled for the project.

- Errors / Warnings / Notices: Total number of errors, warnings or notices found by our SISTRIX crawler for this project.

- Project VI: Value of the project Visibility Index.

- KWs ᐱ: Number of rankings that have improved or been newly gained since the last crawl.

- KWs ᐯ: Number of rankings that have worsened or been lost since the last crawl.

Click the green “Create new Project” button to start a new analysis, or select a project you’ve already created to see its latest data in more detail or to adjust its settings.

You can also add projects to your “Favourites” by clicking on the small star next to the project name. Once you have added domains or Onpage projects to your favourites, they will automatically be displayed in a special dashboard called “Favourites”. Learn more about this in this handbook.

Projects Shared With You

The table also lists the projects that other SISTRIX users have shared with you. Depending on the permissions set by the admin, you can either modify a shared project or only view it.

To share a project with a colleague or an external user, go to the project settings (section “Team”) and add their first name, surname and email address.

One-Time Page Checks

This feature allows you to immediately crawl a domain for Onpage issues. This does not create a project and is a one-time activity.

Simply type in the domain (or subdomain / directory) and our crawlers will do their best to evaluate the first 10,000 pages and give you an Onpage overview of the errors, warnings and notices. You can also see the URLs, links and resources of the domain and analyse them in detail.

You can only run one one-time Onpage check at a time, but there is no limit to the number of one-time checks you can create. Each crawl is saved for 14 days.

Crawl History & Statistics

SERP Requests

A SERP request is defined as any update of the search results for a keyword that has been carried out for you as a user. The number of SERP requests varies depending on the package purchased.

This table provides an overview of the SERP requests that are available to you in the current billing period.

- SERP requests included in the package: Total number of SERP updates available to you with your package.

- Reserved for automatic project keywords: Number of SERP updates used per month across all your Onpage projects. This number depends on the crawling of your personal keyword sets.

- SERP requests requested by the user: Number of SERP updates that were requested manually, for example by updating keywords in lists or tables.

- Remaining SERP requests: Number of SERP updates that are still available for the current billing period.

Learn more about the background in this article.

SERP Requests: Detailed information

Here you will find an overview of the SERP requests that have been carried out for your account in the current month.

- Manual: The number of SERP updates requested by the user, for example by updating keywords in lists or tables.

- Automatic: The number of SERP update requests that were carried out automatically.

- Total usage: The sum of manual and automatic SERP requests.

SERP Updates: Projects

Here you get a detailed overview of the SERP updates used in your account.

- Keyword Count: Number of keywords updated in the project.

- Daily / Weekly / Monthly: Number of SERP updates calculated on a daily, weekly or monthly basis. We calculate 31 SERP updates for daily requests, five for weekly requests and one for monthly requests.

- Total Credits: Total number of SERP updates performed for you in the current billing period.

The last line of the table sums up all values.
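As a rough illustration of how the figures in this table could add up, here is a minimal Python sketch. It uses the weights given above (31, five and one) and assumes they apply per keyword; the function names, project names and keyword counts are hypothetical and not part of SISTRIX.

```python
# Weights quoted in the text: SERP updates counted per update frequency.
UPDATES_PER_FREQUENCY = {"daily": 31, "weekly": 5, "monthly": 1}


def project_credits(keywords_by_frequency):
    """SERP updates counted for one project (assumes the weights apply per keyword)."""
    return sum(
        UPDATES_PER_FREQUENCY[frequency] * keyword_count
        for frequency, keyword_count in keywords_by_frequency.items()
    )


def table_totals(projects):
    """Per-project credits plus a final "Total" entry, like the last line of the table."""
    rows = {name: project_credits(freqs) for name, freqs in projects.items()}
    rows["Total"] = sum(rows.values())
    return rows


# Hypothetical projects and keyword counts, for illustration only.
example = {
    "Shop": {"daily": 10, "weekly": 20},
    "Blog": {"monthly": 50},
}
print(table_totals(example))
```

Under these assumptions, the "Shop" project would count 10 × 31 + 20 × 5 = 410 SERP updates and the "Blog" project 50, for a total of 460.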

Crawl Usage

Each month, you can crawl a specific number of pages across all of your Onpage projects. This number varies according to the package bought.

In this box, you can find an overview of your crawl requests for the current billing period.

More specifically:

- The number of page crawl requests available for your package.

- The number of page crawl requests that you are actually planning to use across all of your projects.

- The number of page crawl requests that you have already used.

- The number of page crawl requests still available.
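The four figures in this box can be related with a small Python sketch. It assumes that the remaining requests are simply the package allowance minus the requests already used, which the interface may calculate differently; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CrawlBudget:
    included: int  # page crawl requests available for the package
    planned: int   # requests planned across all projects
    used: int      # requests already used in this billing period

    @property
    def remaining(self) -> int:
        # Assumption: remaining = included - used, never below zero.
        return max(self.included - self.used, 0)

    @property
    def unplanned(self) -> int:
        # Allowance not yet assigned to any project (assumption).
        return max(self.included - self.planned, 0)


# Hypothetical figures, for illustration only.
budget = CrawlBudget(included=100_000, planned=60_000, used=25_000)
print(budget.remaining, budget.unplanned)
```

Comparing `planned` with `included` in this way is one possible check for whether the scheduled crawls fit into the package.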

Crawl History

“Crawl History” shows you an overview of your projects’ crawls and of the number of URLs left for the month:

- Crawled: Date and time of the crawl.

- Project: Name of the project that was crawled.

- Triggered: Shows whether the crawl was started automatically or manually.

- Credits before: Number of URLs that could still be crawled before this crawl.

- Credits used: Number of URLs crawled during this crawl.

- Credits after: Number of URLs left after this crawl, which can be used for the next crawl.

- Finish reason: Shows whether the crawl completed normally, was interrupted, or stopped because the remaining URL credits were not sufficient to finish it.
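The relationship between the credit columns can be sketched in Python as a simple running ledger. This is an illustration only: it assumes that “Credits after” equals “Credits before” minus “Credits used” and that a crawl stops once the credits run out. The function names and finish-reason labels are hypothetical, not the values shown in SISTRIX.

```python
def record_crawl(credits_before, urls_found):
    """Build the credit columns for one crawl of a project with urls_found URLs."""
    credits_used = min(credits_before, urls_found)
    finished = credits_used == urls_found
    return {
        "credits_before": credits_before,
        "credits_used": credits_used,
        "credits_after": credits_before - credits_used,
        # Hypothetical labels for the "Finish reason" column.
        "finish_reason": "completed" if finished else "insufficient credits",
    }


def replay(starting_credits, crawls):
    """Apply crawls in order; each crawl starts with the credits left by the previous one."""
    history = []
    remaining = starting_credits
    for project, urls_found in crawls:
        row = {"project": project, **record_crawl(remaining, urls_found)}
        history.append(row)
        remaining = row["credits_after"]
    return history


# Hypothetical projects and URL counts, for illustration only.
for row in replay(1000, [("Shop", 700), ("Blog", 500)]):
    print(row)
```

In this example, the second crawl starts with 300 credits, uses all of them and ends with the “insufficient credits” label because the project has 500 URLs.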