The SISTRIX Toolbox lets you analyse a URL exactly as you would a domain, showing its visibility and rankings on Google for both mobile and desktop devices. In this post we’ll walk you through the different elements of this section.

General Options

In the top right corner of the page you’ll find settings that apply to the whole page:

- Date: if you don’t choose a date, the Toolbox shows data for the current week. Selecting an earlier date lets you go back in time and see how the website’s keywords and rankings have developed over the years.

- Filter: the “Expert filter” lets you create complex filter combinations, which you can also save and load.

- Data source: the Toolbox offers an extended database for mobile SERPs, which is why mobile data is the default for the table.

- Export: export the table as a CSV file. Exporting uses some of your credits.

- Shortlink: share the page with other Toolbox users. You’ll get a personalised shortlink, active for a few days, that you can share without any limitations.

Finally, the table’s cogwheel icon lets you export the data or add it to a dashboard or report. It also contains the “Select columns” function, which lets you add further columns to the table.

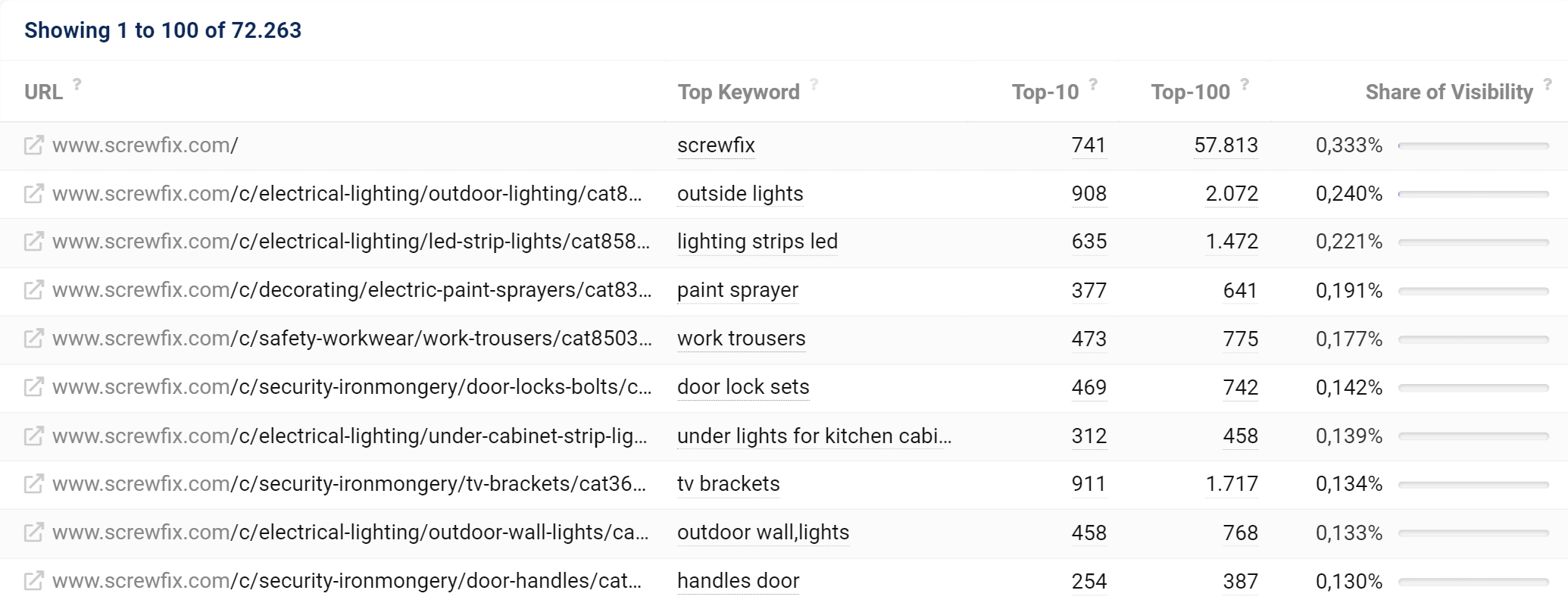

Table of Ranking URLs

This table lists the URLs of the domain (or host, directory or URL) you are evaluating that rank in Google’s Top-10 and Top-100 results. For each URL it also shows the best keyword, which we define as the keyword that generates the most organic clicks for that URL.

The “Share of Visibility” column shows how much each URL contributes to the domain’s total visibility. The value is calculated from all ranking keywords in the extended database, which lets us provide more information for domains with a small Visibility Index.

By default, the table is sorted by the share of visibility.
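To illustrate how the “best keyword” and “Share of Visibility” columns relate to individual keyword rankings, here is a minimal sketch. It is not SISTRIX’s actual calculation: the `Ranking` rows, their `clicks` and `visibility` values and the `summarise` helper are all hypothetical. It only shows the aggregation idea: pick the keyword with the most clicks per URL, sum each URL’s visibility and divide by the total.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Ranking:
    # Hypothetical row: one ranking keyword for one URL.
    url: str
    keyword: str
    clicks: float      # assumed estimate of organic clicks for this keyword
    visibility: float  # assumed per-keyword visibility contribution


def summarise(rankings: list) -> dict:
    """Return {url: (best_keyword, share_of_visibility_in_percent)}."""
    best = {}
    per_url = defaultdict(float)
    for r in rankings:
        per_url[r.url] += r.visibility
        # Best keyword = the keyword with the most organic clicks for that URL.
        if r.url not in best or r.clicks > best[r.url].clicks:
            best[r.url] = r
    total = sum(per_url.values())
    return {
        url: (best[url].keyword, 100 * vis / total if total else 0.0)
        for url, vis in per_url.items()
    }


rows = [
    Ranking("https://example.com/a", "red shoes", 120, 3.0),
    Ranking("https://example.com/a", "shoes sale", 40, 1.0),
    Ranking("https://example.com/b", "blue hats", 80, 2.0),
]

# Sort by share of visibility, highest first, like the table's default order.
for url, (kw, share) in sorted(summarise(rows).items(), key=lambda kv: kv[1][1], reverse=True):
    print(f"{url}  {kw}  {share:.1f}%")
```

With these made-up numbers, `/a` gets 4.0 of 6.0 visibility points (66.7%, best keyword “red shoes”) and `/b` gets 2.0 (33.3%, “blue hats”).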

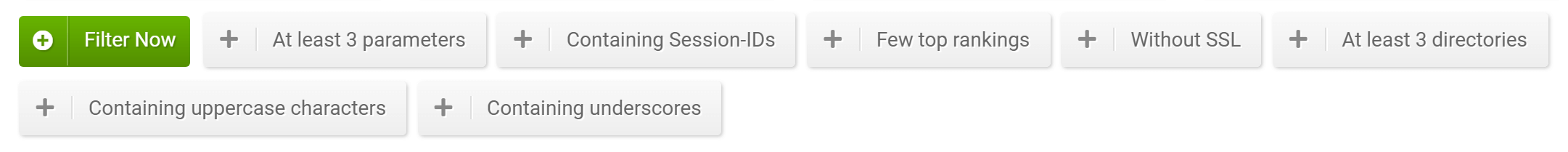

Filters

The filters let you refine your list of URLs further and discover features that could be optimised.

Some filters are available directly in the interface (Quick Filters), while others can be selected by clicking the green “Filter now” button.

Here’s a quick explanation of every available URL filter:

- URL: finds URLs that contain (or do not contain) a given text. For example, the quick filter “Contains underscores” shows all URLs with underscores, which is useful because Google recommends using hyphens rather than underscores in URLs.

- Number of parameters: filters URLs by their number of parameters. URLs with more than 3 parameters should be avoided because they are highly prone to Duplicate Content problems; the quick filter “At least 3 parameters” helps you spot them.

- Number of directories: filters URLs by their number of directories. The quick filter “At least 3 directories” finds URLs that sit deep in the site architecture; these risk being crawled less often by Google than URLs higher up in the structure, or not at all.

- Top-10 ratio: indicates what share of a URL’s Top-100 keywords also rank on Google’s first results page. The quick filter “Few top rankings”, for example, shows all URLs for which fewer than 3% of their Top-100 keywords rank on the first organic page; you may want to check whether you want these URLs in Google’s index at all.

- Containing Session IDs: filters URLs that contain (or do not contain) Session IDs. Session IDs can easily cause Duplicate Content problems, so it is worth checking why your system serves Session IDs to users and Googlebot.

- Containing uppercase characters: shows only URLs that contain uppercase characters. It is best to avoid uppercase characters in URLs: URLs that differ only in letter case are technically different URLs, which can cause trouble when setting up 301 redirects.

- SSL encryption: shows URLs that use (or don’t use) the HTTPS protocol. This is particularly useful for finding URLs that are still not secure (quick filter: “Without SSL”).
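If you want to run similar checks on your own URL lists, for example on an exported table, the criteria above can be approximated in a few lines. This is a minimal sketch using Python’s standard `urllib.parse`, not the Toolbox’s own logic: the helper names `url_features` and `quick_filter_hits`, the list of session-ID parameter names, and the rule for counting directories are all assumptions. The Top-10 and Top-100 keyword counts must come from your ranking data.

```python
from urllib.parse import parse_qsl, urlsplit

# Assumption: common session-ID parameter names; your system may use others.
SESSION_KEYS = {"sid", "sessionid", "session_id", "phpsessid", "jsessionid"}


def url_features(url: str, top10: int = 0, top100: int = 0) -> dict:
    """Compute URL properties similar to the filters described above (hypothetical helper)."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query, keep_blank_values=True)

    # Assumption: every path segment is a directory except a final page segment.
    segments = [s for s in parts.path.split("/") if s]
    if segments and not parts.path.endswith("/"):
        segments = segments[:-1]

    keys = {k.lower() for k, _ in params}
    return {
        "underscores": "_" in url,
        "parameters": len(params),
        "directories": len(segments),
        # Share of Top-100 keywords that also rank in the Top 10.
        "top10_ratio": top10 / top100 if top100 else 0.0,
        # Session IDs in the query string, or Java-style ";jsessionid=" in the path.
        "session_id": bool(keys & SESSION_KEYS) or ";jsessionid=" in parts.path.lower(),
        # Only path and query are case-sensitive; the host name is not, so skip it.
        "uppercase": any(c.isupper() for c in parts.path + parts.query),
        "https": parts.scheme.lower() == "https",
    }


def quick_filter_hits(features: dict) -> list:
    """Map features to the quick filters named in the text, using the thresholds given there."""
    hits = []
    if features["underscores"]:
        hits.append("Contains underscores")
    if features["parameters"] >= 3:
        hits.append("At least 3 parameters")
    if features["directories"] >= 3:
        hits.append("At least 3 directories")
    if features["top10_ratio"] < 0.03:
        hits.append("Few top rankings")
    if not features["https"]:
        hits.append("Without SSL")
    return hits


f = url_features(
    "http://example.com/Shop/my_category/sub/item.html?a=1&b=2&sessionid=XYZ",
    top10=1,
    top100=50,
)
print(quick_filter_hits(f))
# ['Contains underscores', 'At least 3 parameters', 'At least 3 directories', 'Few top rankings', 'Without SSL']
```

The example URL also has a session ID and uppercase characters (`f["session_id"]` and `f["uppercase"]` are both `True`); these two filters have no quick filter in the text, so they are left out of `quick_filter_hits`.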